UX Auditing

Building a scalable research practice

TL;DR

Role: Product Design Manager

Collaborators: Two senior designers on my team

Scope: AI-assisted grading, written response assessment, question authoring, and lab simulation tools within a higher education courseware platform serving millions of instructors and students

Focus: Building a repeatable UX audit practice from scratch and translating findings into executive-level strategic narrative

Impact: Created a scalable audit template now used across multiple tools, directly informed the Product Strategy North Star, and surfaced voice-of-customer input that now influences roadmap decisions and semester planning

The Problem

We had a strategic vision but no systematic way to evaluate our tools against real user needs. No audit process existed, and the designers on my team had never conducted a UX audit before.

We had access to customer events, NPS data, and user feedback. Without a structured process, those signals were difficult to prioritize and even harder to translate into recommendations we could defend in front of product and engineering leadership. We needed a way to collect, organize, and tell the story of what we were hearing.

An instructor testing one of our tools at a customer event

What I Did

I built the audit practice from the ground up, defining the framework, coaching the team through execution, and synthesizing their findings into a strategic narrative that could move stakeholders.

Two senior designers on my team conducted the first two audits across three tools. Neither had done a UX audit before. I reviewed their work regularly, coaching toward sharper pain point articulation, jobs-to-be-done framing, and theme identification.

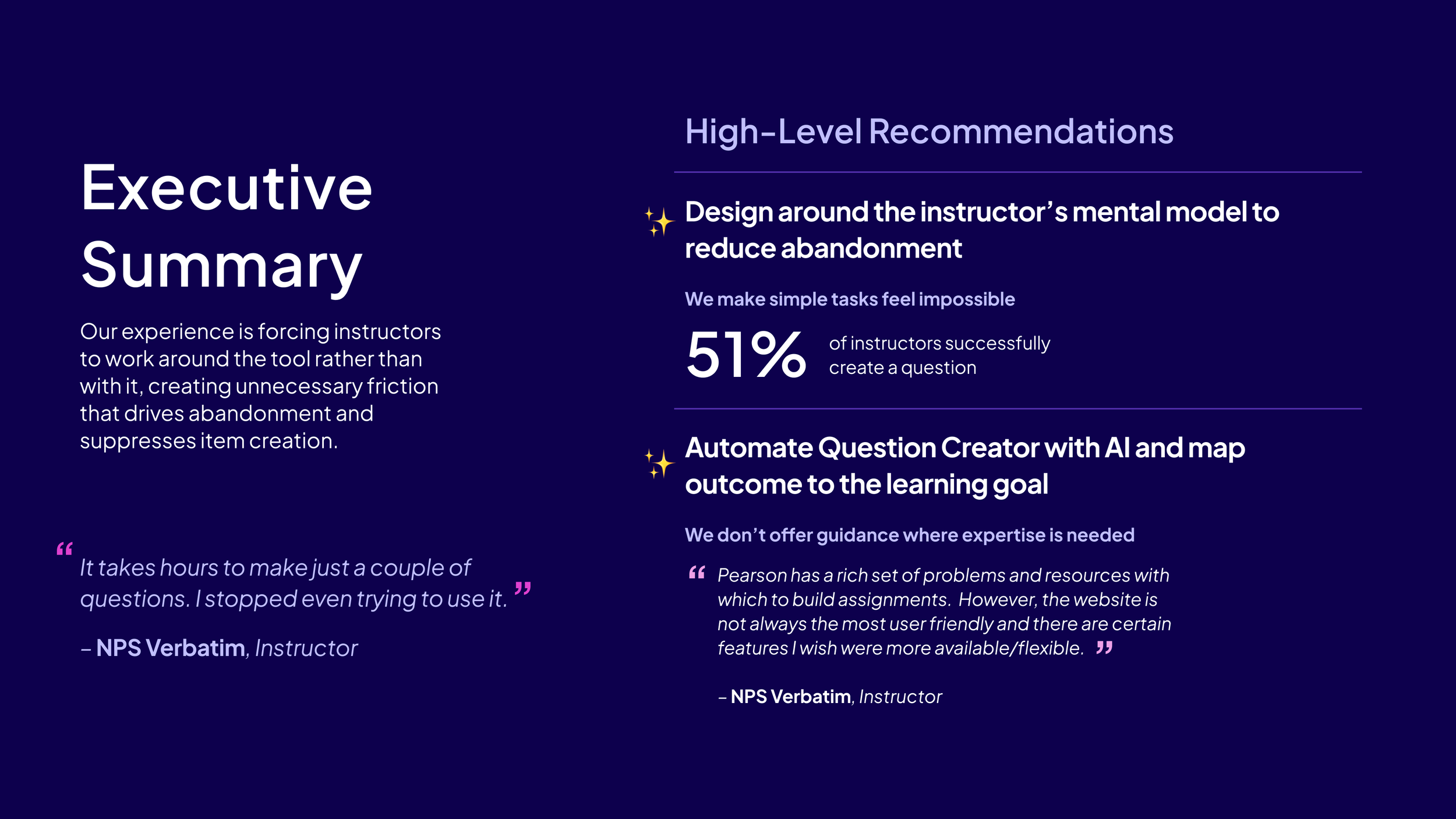

I then structured their raw findings into executive-ready presentations, connecting individual tool problems to the broader strategic opportunity and framing recommendations in terms product and engineering could act on.

“If I had AI efficiencies for authentic assessment I wouldn’t assign multiple choice. I don’t want to teach that way — I just don’t have time to really grade the type of work I want.”

What Came From It

A repeatable practice: The first two audits became the foundation and template for all future tool audits. The lab simulation audit followed the same structure. The process is now a living document used for onboarding new team members and supporting transitions.

Strategic influence: Audit findings directly informed the Product Strategy North Star vision. They are now referenced in semester planning reviews as a structured voice of customer input and are actively influencing roadmap prioritization across product and engineering.

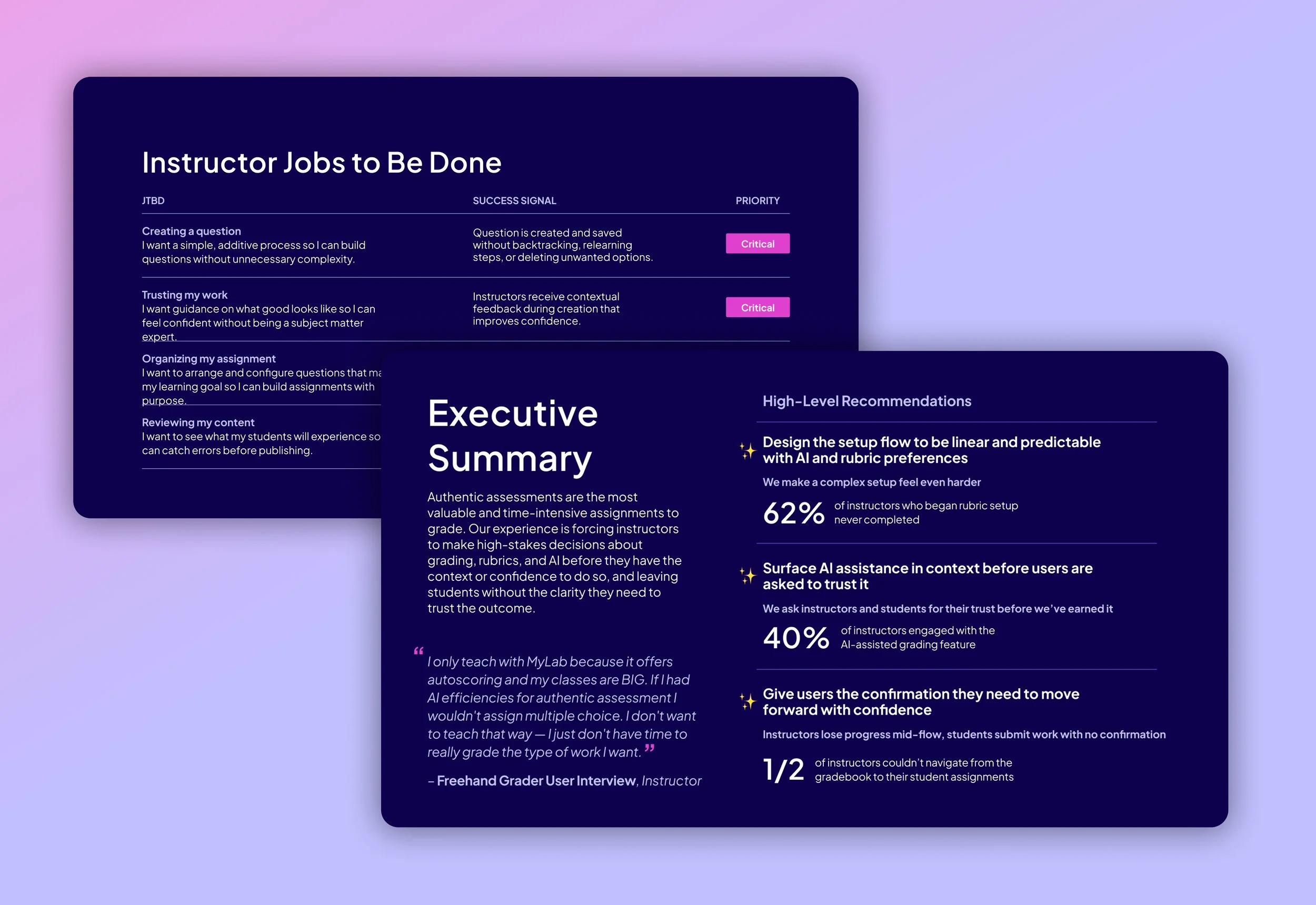

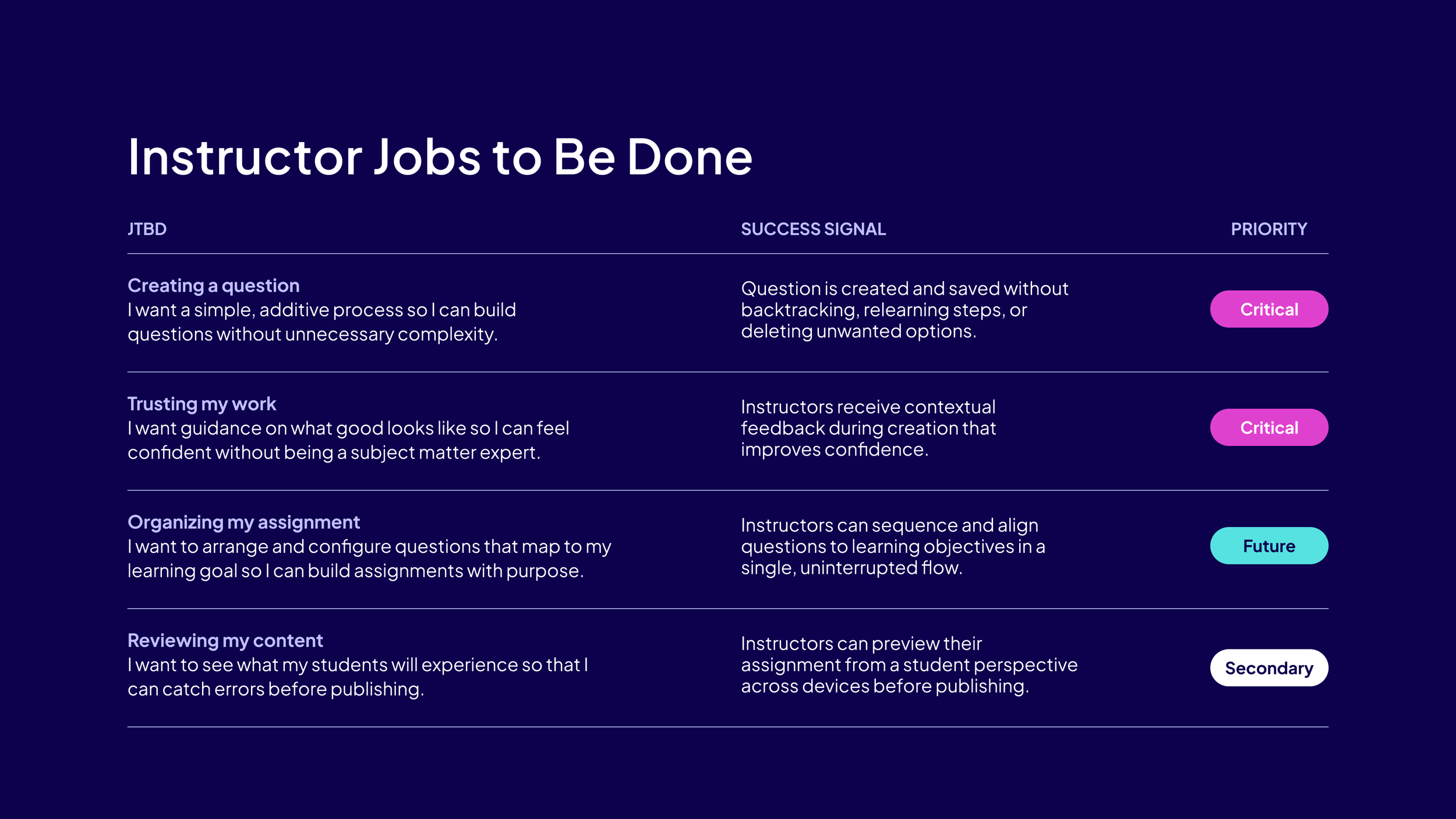

The JTBD framework that became the standard template across all audits

Outcomes

Audit template now used across multiple tools with more planned

Serves as a living onboarding and transition resource for the team

Findings directly informed the Product Strategy North Star vision

Referenced in semester planning reviews as structured voice of customer

Actively influencing roadmap decisions across product and engineering

Key Insights

Research needs a story. Raw findings don't move stakeholders. Structuring audit outputs into a coherent narrative was as important as the research itself.

Voice of customer changes the conversation. Grounding design recommendations in real user friction made them significantly harder to deprioritize.

Capability over deliverable. The goal was never one audit. Building a repeatable process the team could own and scale was the real outcome.
